Slop is the perfect word to describe the rise in AI-generated social media content. AI video platforms can churn out videos faster than any person can make them. The price of this overwhelming volume of content is connection and humanity.

The first wave of artificially generated “entertainment” was eerie. The characters moved unnaturally and stressed the wrong words, and the dialogue was often scraped from some corner of the internet. It was essentially an amalgamation of human content, haphazardly wired together.

But AI is moving forward at breakneck speed. On Sept. 30, OpenAI, the company that developed ChatGPT, launched Sora2. It’s an updated video generation model that is “more physically accurate, realistic, and more controllable than prior systems.”

While some videos still exhibit the stiff movements and glossy textures that reveal their AI origins, the model is undeniably an improvement over its predecessor. Many of the videos OpenAI showcases on its website seem real.

However, this advancement comes at a cost. Sora2, like every other AI model, was trained on human-generated content, much of which was used without the creators’ consent. Early-access users reported that the app was generating videos featuring copyrighted characters like Pikachu and Ronald McDonald, just weeks after another AI company, Anthropic, agreed to pay a $1.5 billion settlement over copyright infringement.

AI models can only improve by learning from human content, so it is ironic that these apps aim to compete with the very creators they rely on. First, the companies allegedly use that content without permission to train the models. Then they use AI to mimic the creators’ work, essentially stealing from them twice. But legality is not the only concern with Sora2; the spread of misinformation is equally, if not more, troubling.

While there are ways to discern that videos created using Sora2 are AI-generated, a user casually scrolling through their For You page may not immediately realize it. Users should not be expected to scrutinize every video they see, checking for slightly unnatural qualities. Many might not realize they are interacting with fake content.

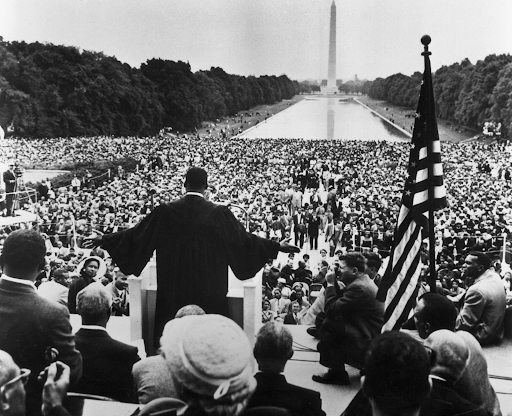

Ninety-five percent of Americans agree that misinformation is a problem. Deepfakes are an effective and convincing way of spreading it. Despite the consensus that deception is a threat, the federal government has placed few regulations on developers.

AI also consumes extensive resources. It’s expected to account for 13.2% of U.S. electricity use by 2028, driving higher water use, emissions and e-waste. It’s disappointing that we are speeding up global warming just to generate more slop.

Human-created content in any medium, even just short-form social media videos, is more admirable than anything AI could generate. Its value lies in the knowledge that the creator devoted their time, resources and themselves to their content, and in the attempt to connect with another human out there.